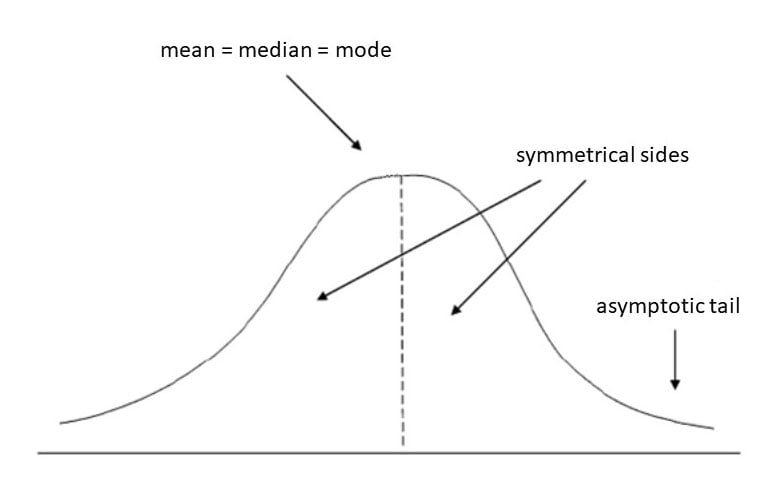

A normal distribution (or Gaussian distribution) is a symmetric, bell-shaped probability distribution where most data points cluster around a central mean, tapering off towards the tails.

Because it describes so many natural phenomena, from heights to test scores, it is the cornerstone of modern statistics.

Properties of the normal distribution

A normal distribution is perfectly symmetrical, meaning a vertical line drawn through the center of the curve divides it into two identical mirror images.

Because of this perfect symmetry, the three measures of central tendency (the mean, the median or middle value, and the mode or most frequent value) all fall at exactly the same point in the center of the distribution.

Two specific parameters, the mean and standard deviation, dictate the curve’s geometry. These values provide all the information required to construct the distribution.

The mean acts as the location parameter. It determines the position of the peak along the horizontal axis. Shifting the mean moves the entire curve left or right without changing its width.

The standard deviation serves as the scale parameter. It measures the spread or “dispersion” of the data points. A low standard deviation produces a tall, narrow curve. Conversely, a high standard deviation results in a shorter, wider bell shape.
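For reference, both parameters enter the curve through the standard probability density function of the normal distribution. The notation f(x) is chosen here for illustration, with μ for the mean and σ for the standard deviation:

```latex
f(x) = \frac{1}{\sigma\sqrt{2\pi}} \exp\!\left( -\frac{(x-\mu)^2}{2\sigma^2} \right)
```

The mean μ appears only inside (x − μ), which is why changing it slides the curve sideways. The standard deviation σ scales the width of the exponent and, through the factor 1/(σ√(2π)), the height of the peak.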

Because the normal distribution is a continuous distribution, probability is represented by the area under the curve. The total area under the entire bell curve is exactly equal to 1 (or 100%).
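As a rough numerical check of this property, the sketch below approximates the area under a normal curve with the trapezoidal rule. The function names and the example parameters (mean 100, standard deviation 15) are assumptions made for illustration, not values from the text.

```python
import math

def normal_pdf(x, mu=0.0, sigma=1.0):
    """Probability density of a normal distribution at x."""
    z = (x - mu) / sigma
    return math.exp(-0.5 * z * z) / (sigma * math.sqrt(2.0 * math.pi))

def area_under_curve(mu, sigma, lower, upper, steps=20_000):
    """Approximate the area under the density between lower and upper
    using the trapezoidal rule."""
    width = (upper - lower) / steps
    total = 0.5 * (normal_pdf(lower, mu, sigma) + normal_pdf(upper, mu, sigma))
    for i in range(1, steps):
        total += normal_pdf(lower + i * width, mu, sigma)
    return total * width

# Hypothetical example parameters.
mu, sigma = 100.0, 15.0

# Integrating over mu +/- 8 sigma captures essentially all of the area.
total_area = area_under_curve(mu, sigma, mu - 8 * sigma, mu + 8 * sigma)
print(round(total_area, 6))
```

The printed value should be very close to 1, whichever mean and standard deviation are chosen.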

Most of the continuous data values in a normal distribution tend to cluster around the mean, and the further a value is from the mean, the less likely it is to occur.

The tails are asymptotic, which means that they approach but never quite meet the horizontal axis (i.e., the x-axis).

The normal distribution is often called the bell curve because the graph of its probability density looks like a bell.

It is also known as the Gaussian distribution, after the German mathematician Carl Friedrich Gauss, who applied it to the analysis of measurement errors.

Why is the normal distribution important?

The bell-shaped curve is a common feature of nature and psychology

The normal distribution is the most important probability distribution in statistics because many continuous data in nature and psychology display this bell-shaped curve when compiled and graphed.

For example, if we randomly sampled 100 individuals, we would expect to see an approximately normal frequency curve for many continuous variables, such as IQ, height, weight, and blood pressure.

Role in the Central Limit Theorem

The normal distribution is exceptionally powerful due to its connection to the Central Limit Theorem.

This mathematical theorem states that if you repeatedly take sufficiently large random samples (30 or more observations is a common rule of thumb) from a population with a finite variance and calculate their means, the distribution of those sample means will be approximately normal.

This happens regardless of the shape of the original underlying population’s distribution.
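A small simulation can make the theorem concrete. The sketch below is only an illustration under assumed settings: it draws samples of 30 from an exponential population (chosen because it is strongly skewed), and the seed, sample count, and variable names are arbitrary choices.

```python
import random
import statistics

rng = random.Random(42)  # fixed seed so the sketch is reproducible

SAMPLE_SIZE = 30      # observations per sample (the rule-of-thumb minimum)
NUM_SAMPLES = 5_000   # how many sample means to collect

# Each sample comes from an exponential population (mean 1, standard
# deviation 1), which is right-skewed and clearly not bell-shaped.
sample_means = [
    statistics.fmean(rng.expovariate(1.0) for _ in range(SAMPLE_SIZE))
    for _ in range(NUM_SAMPLES)
]

mean_of_means = statistics.fmean(sample_means)
sd_of_means = statistics.stdev(sample_means)

# Share of sample means lying within one standard deviation of their centre.
share_within_one_sd = sum(
    abs(m - mean_of_means) <= sd_of_means for m in sample_means
) / NUM_SAMPLES

print(round(mean_of_means, 3), round(sd_of_means, 3), round(share_within_one_sd, 3))
```

If the theorem holds, the sample means should centre near the population mean of 1, have a standard deviation near 1/√30 (roughly 0.18), and place about two thirds of their values within one standard deviation of the centre, as a bell curve would.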

How do mean and standard deviation change a bell curve’s shape?

Because there are an infinite number of possible normal distributions, these two mathematical measures are required to uniquely define any specific curve.

Here is how each parameter alters the bell curve:

Mean (μ): Shifts the Curve’s Location

The mean establishes the center point of the distribution. The bell curve is perfectly symmetrical around a vertical line drawn straight down through this mean.

If the standard deviation is kept constant but the mean is changed, the physical shape (the height and width) of the bell curve does not change at all.

Instead, the entire graph simply shifts its location to the left or the right along the horizontal x-axis.

Standard Deviation (σ): Alters the Curve’s Shape

The mean tells you exactly where the center of the bell curve sits on a graph, while the standard deviation tells you how tall and wide the bell will be.

The standard deviation measures the variability or spread of the data, indicating how far data values fall from the mean.

Because the total area under any continuous probability distribution curve must always equal exactly one (or 100%), changing the standard deviation directly dictates how “fat” or “skinny” the curve appears.

- Small Standard Deviation: When the standard deviation is small, it indicates that most data points are tightly concentrated and packed close to the center. To accommodate this lack of spread while keeping the total area at 1, the bell curve becomes taller, narrower, and more “pointy”.

- Large Standard Deviation: When the standard deviation is large, it indicates that the data values are widely dispersed and spread out away from the center. To account for this wide spread across the x-axis, the bell curve stretches out and becomes flatter, wider, and “fatter”.
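The effect of each parameter can be checked numerically. The sketch below uses Python's standard-library `statistics.NormalDist` and three hypothetical curves (the specific means and standard deviations are made up for illustration) to compare the height of the peak.

```python
from statistics import NormalDist

# Three hypothetical curves: a baseline, a shifted mean, and a larger spread.
baseline = NormalDist(mu=0, sigma=1)
shifted = NormalDist(mu=5, sigma=1)   # same spread, different mean
wider = NormalDist(mu=0, sigma=2)     # same mean, doubled spread

# The peak of a normal curve sits at its mean, so evaluate the density there.
peak_baseline = baseline.pdf(baseline.mean)
peak_shifted = shifted.pdf(shifted.mean)
peak_wider = wider.pdf(wider.mean)

print(round(peak_baseline, 4), round(peak_shifted, 4), round(peak_wider, 4))
```

Changing only the mean leaves the peak height untouched, while doubling the standard deviation halves it, because the same total area of 1 has to be spread over a wider range.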

Parametric significance tests require a normal distribution of the sample’s data points

Many of the most powerful (parametric) statistical tests psychologists use, such as t-tests and ANOVA, assume that the data are approximately normally distributed.

If the data do not resemble a bell curve, researchers may instead use non-parametric tests, which make fewer assumptions about the distribution but are generally less powerful.

Converting the raw scores of a normal distribution to z-scores

Because there is an infinite number of possible normal distributions (depending on the mean and standard deviation of any specific dataset), statisticians convert data into a standardized format called the Standard Normal Distribution.

The Standard Normal Distribution always has a mean of 0 and a standard deviation of 1.

We can standardize a normal distribution’s values (raw scores) by converting them into z-scores.

A z-score precisely measures the distance of a data point from the mean, expressed purely in units of standard deviation.

- A z-score of exactly 0 indicates that the raw score is perfectly equal to the mean.

- A positive z-score indicates that the raw score falls above (to the right of) the mean.

- A negative z-score indicates that the raw score falls below (to the left of) the mean.

- The absolute value of the z-score reveals exactly how far the score deviates from the center, regardless of the direction.
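These properties follow directly from the usual z-score formula, where x is the raw score, μ the mean, and σ the standard deviation of the distribution:

```latex
z = \frac{x - \mu}{\sigma}
```

When x equals μ the numerator is zero, and because σ is always positive, the sign of z depends only on whether x lies above or below the mean.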

By converting raw scores into z-scores, statisticians can meaningfully compare observations from completely different distributions that rely on entirely different scales.

For example, standardizing scores allows researchers to compare a student’s performance on the SAT (which has a mean around 500) with another student’s performance on the ACT (which has a mean around 21) to objectively determine who performed better relative to their respective testing populations.
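A minimal sketch of such a comparison follows. The means are the approximate values given above, but the standard deviations (100 and 5) and both students' raw scores are hypothetical numbers chosen only to illustrate the calculation.

```python
def z_score(raw_score, mean, sd):
    """Number of standard deviations a raw score lies from the mean."""
    return (raw_score - mean) / sd

# Means follow the text; the standard deviations and both raw scores
# are hypothetical values used purely for illustration.
sat_z = z_score(raw_score=650, mean=500, sd=100)
act_z = z_score(raw_score=27, mean=21, sd=5)

# The larger z-score marks the stronger performance relative to peers.
better = "SAT student" if sat_z > act_z else "ACT student"
print(sat_z, act_z, better)
```

Under these assumed figures the SAT student sits 1.5 standard deviations above the mean and the ACT student 1.2, so the SAT student performed better relative to their testing population even though the raw scores are on different scales.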

What is the empirical rule formula?

A highly useful property of a normal distribution is how predictably the data is dispersed around the mean.

Known as the Empirical Rule, or the 68-95-99.7 rule, it states that in any normal distribution:

- Approximately 68% of the data values lie within one standard deviation of the mean (μ±1σ).

- Approximately 95% of the data values lie within two standard deviations of the mean (μ±2σ).

- Approximately 99.7% (almost all) of the data values lie within three standard deviations of the mean (μ±3σ).

Once the data values are converted to z-scores, the same rule applies on the standard normal scale: about 68% of z-scores fall between -1 and +1, about 95% between -2 and +2, and about 99.7% between -3 and +3.

The empirical rule allows researchers to calculate the probability of randomly obtaining a score from a normal distribution.

About 68% of the data fall within one standard deviation of the mean. This means there is roughly a 68% probability of randomly selecting a score between -1 and +1 standard deviations from the mean.

About 95% of the values fall within two standard deviations of the mean. This means there is roughly a 95% probability of randomly selecting a score between -2 and +2 standard deviations from the mean.

About 99.7% of the data fall within three standard deviations of the mean. This means there is roughly a 99.7% probability of randomly selecting a score between -3 and +3 standard deviations from the mean.
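These percentages can be reproduced from the cumulative distribution function of the standard normal distribution. The sketch below uses Python's standard-library `statistics.NormalDist`; the helper name `share_within` is an assumption made for this example.

```python
from statistics import NormalDist

standard_normal = NormalDist(mu=0, sigma=1)

def share_within(k):
    """Proportion of a normal distribution lying within k standard
    deviations of the mean, computed as the area between -k and +k."""
    return standard_normal.cdf(k) - standard_normal.cdf(-k)

for k in (1, 2, 3):
    print(k, round(share_within(k) * 100, 2))
```

The results should be close to 68%, 95%, and 99.7%, which shows that the rule's figures are rounded values of these areas.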

How to check data

Statistical software (such as SPSS) can be used to check if your dataset is normally distributed by calculating the three measures of central tendency.

If the mean, median, and mode are very similar values, there is a good chance that the data follows a bell-shaped distribution.
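The same informal check can be sketched outside SPSS. The example below uses Python's standard library on a simulated dataset; the data, the seed, and the parameters (mean 100, standard deviation 15) are hypothetical and stand in for a real sample.

```python
import random
import statistics

rng = random.Random(7)  # fixed seed so the sketch is reproducible

# Hypothetical dataset: 10,000 simulated scores rounded to whole numbers,
# so that a most frequent value (mode) is meaningful.
scores = [round(rng.gauss(100, 15)) for _ in range(10_000)]

sample_mean = statistics.fmean(scores)
sample_median = statistics.median(scores)
sample_mode = statistics.mode(scores)

print(round(sample_mean, 2), sample_median, sample_mode)
```

Similar values for all three measures are consistent with a bell-shaped distribution, but they do not prove it: other symmetric distributions share this property, so the check is best combined with a plot.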

It is also advisable to plot a frequency graph so you can check the visual shape of your data. If your chart is a histogram, you can add a distribution curve using SPSS: from the menus, choose Elements > Show Distribution Curve.

The bell shape tends to become more apparent the finer the level of measurement and the larger the sample drawn from the population.

You can also calculate coefficients such as kurtosis, which describes the size of the distribution’s tails in relation to the bump in the middle of the bell curve.

In addition, formal normality tests such as the Kolmogorov-Smirnov and Shapiro-Wilk tests can be calculated using SPSS.

These tests compare your data to a normal distribution and provide a p-value. A significant result (p < .05) indicates that your data differ from a normal distribution, so on this occasion we do not want a significant result and need a p-value higher than .05.

Is a normal distribution kurtosis 0 or 3?

A normal distribution has a kurtosis of 3. However, sometimes people use “excess kurtosis,” which subtracts 3 from the kurtosis of the distribution to compare it to a normal distribution.

In that case, the excess kurtosis of a normal distribution would be 3 − 3 = 0.

So, the normal distribution has kurtosis of 3, but its excess kurtosis is 0.
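To see both conventions side by side, the sketch below estimates kurtosis from simulated normal data. It uses the simple moment-based definition (fourth central moment divided by the squared variance); the function name, seed, and sample size are assumptions, and statistical packages may apply small-sample corrections that give slightly different numbers.

```python
import random
import statistics

def kurtosis(values):
    """Moment-based kurtosis: the fourth central moment divided by the
    squared (population) variance."""
    center = statistics.fmean(values)
    second_moment = statistics.fmean((v - center) ** 2 for v in values)
    fourth_moment = statistics.fmean((v - center) ** 4 for v in values)
    return fourth_moment / second_moment ** 2

rng = random.Random(1)  # fixed seed so the sketch is reproducible
sample = [rng.gauss(0, 1) for _ in range(50_000)]

sample_kurtosis = kurtosis(sample)
excess_kurtosis = sample_kurtosis - 3  # the "excess" convention

print(round(sample_kurtosis, 2), round(excess_kurtosis, 2))
```

For a large normal sample the first value should land near 3 and the second near 0, so it is worth checking which convention a given program reports before interpreting its output.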