The base-rate fallacy, also known as base-rate neglect, is a cognitive bias in which people ignore or underweight general statistical information (the base rate) in favor of specific, vivid details about the case at hand.

In psychology, base-rate information refers to the relative frequency of an event or attribute within a given population.

When people are given both a general base rate and a specific description, they consistently underweight the base rate.

To reach an accurate conclusion, one should ideally use Bayes’ theorem, a formula for updating the probability of a hypothesis as new evidence arrives.

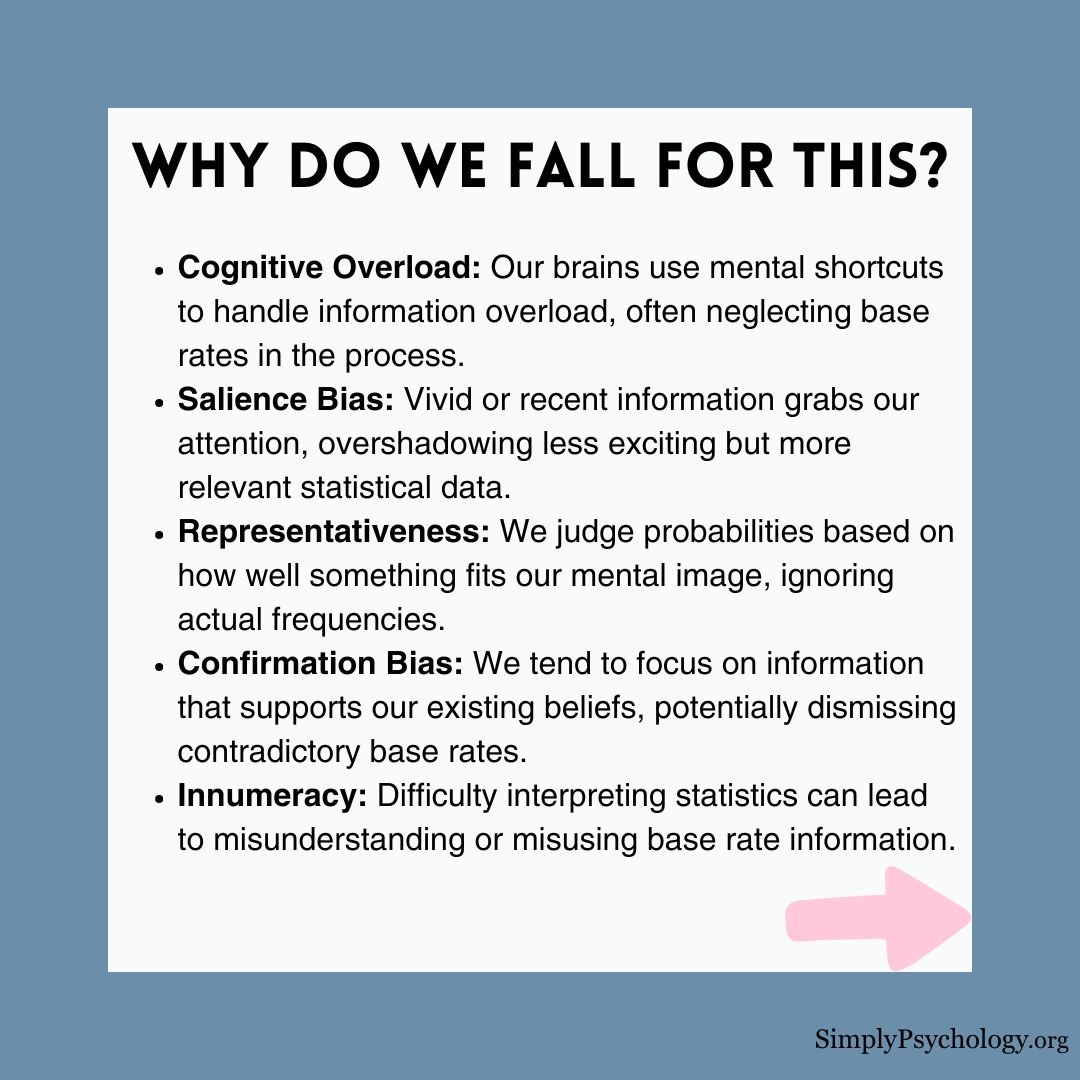
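For reference, the theorem can be written for a hypothesis and a piece of evidence as shown below. The symbols H (hypothesis) and E (evidence) are notation chosen here for illustration and do not come from the original text. P(H) plays the role of the base rate.

```latex
P(H \mid E) = \frac{P(E \mid H)\,P(H)}{P(E \mid H)\,P(H) + P(E \mid \neg H)\,P(\neg H)}
```

In these terms, base-rate neglect amounts to judging P(H | E) largely from P(E | H), how well the evidence fits the hypothesis, while giving too little weight to P(H).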

Why it happens

Several psychological frameworks explain why the human mind struggles with statistical logic. These range from how we categorize people to how our brains conserve energy.

The Representativeness Heuristic

Psychologists Daniel Kahneman and Amos Tversky argue that this error stems from the representativeness heuristic.

This is a mental shortcut where people judge probability based on how well a description matches a mental prototype or stereotype.

This can lead to errors in judgment, as people do not take the time to process all of the available information and weigh it up properly.

Dual-Process Thinking

This bias serves as a primary example of the tension between two distinct modes of human thought.

System 1 is our fast, automatic, and intuitive processing mode. In contrast, System 2 is our slow, analytical, and effortful mode of reasoning.

Base-rate neglect occurs when the “lazy” System 2 fails to monitor the flawed suggestions of System 1.

While System 1 leaps to conclusions based on vivid details, System 2 is required to perform the difficult work of statistical integration.

If no descriptive details are provided, people use base rates accurately.

However, the moment distracting details appear, analytical thinking is often abandoned.

The Natural Frequency Hypothesis

Evolutionary psychologists Gerd Gigerenzer and Ulrich Hoffrage suggest that humans are not “bad at math.”

Instead, they argue our brains evolved for natural sampling.

This is the process of encountering real-world instances one by one over time.

Our ancestors did not process abstract percentages or fractions.

Research shows that when base-rate problems use natural frequencies (e.g., “10 out of 100 people”) instead of percentages (e.g., “10%”), accuracy improves.

Even medical students perform better when data is presented as raw counts rather than abstract probabilities.
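The difference between the two formats can be sketched in a few lines of Python. This is a minimal illustration, not a reconstruction of any study: the function name `to_natural_frequencies` and all of the figures (a 1% base rate, an 80% hit rate, a 10% false-alarm rate, a population of 1,000) are hypothetical and were chosen only so that the counts come out as whole numbers.

```python
def to_natural_frequencies(population, base_rate, hit_rate, false_alarm_rate):
    """Restate a probability-format problem as whole-number counts."""
    affected = round(population * base_rate)
    unaffected = population - affected
    true_positives = round(affected * hit_rate)
    false_positives = round(unaffected * false_alarm_rate)
    return affected, unaffected, true_positives, false_positives


# Hypothetical figures, chosen only so that the counts come out whole.
affected, unaffected, true_pos, false_pos = to_natural_frequencies(
    population=1000, base_rate=0.01, hit_rate=0.80, false_alarm_rate=0.10
)
all_positives = true_pos + false_pos
share_affected = true_pos / all_positives

print(f"{affected} of 1000 people have the condition; {true_pos} of them test positive.")
print(f"{unaffected} do not; {false_pos} of them also test positive.")
print(f"So {true_pos} of {all_positives} positives are real ({share_affected:.0%}).")
```

Once the problem is stated as counts, the answer is a simple ratio of two groups of people, and the small size of the affected group is hard to overlook.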

Examples

The base-rate fallacy is a decision-making error in which information about the rate of occurrence of some trait in a population (the base-rate information) is ignored or not given appropriate weight.

The fallacy often appears in our daily assumptions about the people we encounter.

- The Subway Reader: If you see someone reading The New York Times on a subway, you might guess they have a PhD. However, statistically, there are vastly more people without college degrees on the subway than people with PhDs.

- The “Californian” Student: You might meet a blond, “mellow” student named Brian who loves the beach. While he fits a California stereotype, if the university has a much higher base rate of New Yorkers, it is statistically wiser to guess Brian is from New York.

- Steve the Librarian: Steve is described as a “meek and tidy soul.” While this fits the librarian stereotype, there are significantly more male farmers in the population than male librarians.
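The logic behind these examples can be sketched with the odds form of Bayes’ theorem, in which posterior odds equal prior odds multiplied by a likelihood ratio. The numbers below are assumptions made up for illustration, not figures from the text: 20 male farmers for every male librarian, and a description that is 4 times as likely to fit a librarian as a farmer.

```python
def posterior_probability(prior_odds: float, likelihood_ratio: float) -> float:
    """Odds form of Bayes' theorem: posterior odds = prior odds * likelihood ratio."""
    posterior_odds = prior_odds * likelihood_ratio
    # Convert odds back to a probability.
    return posterior_odds / (1.0 + posterior_odds)


# Assumed for illustration: 1 male librarian for every 20 male farmers.
prior_odds_librarian = 1 / 20
# Assumed for illustration: the description fits a librarian 4 times as well.
likelihood_ratio = 4.0

p_librarian = posterior_probability(prior_odds_librarian, likelihood_ratio)
print(f"P(librarian | description) = {p_librarian:.2f}")
```

Under these assumed numbers, Steve is still far more likely to be a farmer, because a description that favors librarians four to one cannot overcome a population that favors farmers twenty to one.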

Empirical Validation: Classic Experiments

The following studies demonstrate how this fallacy persists even when participants are given the correct statistical data.

1. The Taxi-Cab Problem (Kahneman & Tversky, 1972)

- Aim: To observe how participants weigh eyewitness testimony against known population statistics.

- Procedure: Participants imagined a city where 85% of cabs are Green and 15% are Blue. A witness identified a hit-and-run cab as “Blue.” Testing showed that the witness identified cab colors correctly 80% of the time.

- Findings: Most participants estimated the probability that the cab was Blue at roughly 80%. They focused almost entirely on the witness and ignored the 85% base rate of Green cabs.

- Conclusions: People prioritize specific evidence over base rates. Applying Bayes’ theorem, the probability that the cab was Blue is only about 41%.
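The 41% figure can be checked with a short calculation. The sketch below assumes, as the problem is usually read, that the witness is 80% accurate for both colors, so a Green cab is reported as “Blue” 20% of the time. The function name `posterior_blue` is introduced here for illustration.

```python
def posterior_blue(prior_blue: float, witness_accuracy: float) -> float:
    """Probability the cab was Blue, given that the witness said "Blue"."""
    prior_green = 1.0 - prior_blue
    # The cab is Blue and the witness correctly reports "Blue".
    said_blue_and_blue = witness_accuracy * prior_blue
    # The cab is Green and the witness mistakenly reports "Blue".
    said_blue_and_green = (1.0 - witness_accuracy) * prior_green
    return said_blue_and_blue / (said_blue_and_blue + said_blue_and_green)


p_blue = posterior_blue(prior_blue=0.15, witness_accuracy=0.80)
print(f"P(Blue | witness says Blue) = {p_blue:.2f}")  # prints 0.41
```

The two ways of hearing “Blue” have probabilities 0.12 (Blue cab, correct witness) and 0.17 (Green cab, mistaken witness), so a mistaken report about a Green cab is actually the more likely explanation.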

2. The Student Stereotype (Tom W.) (Kahneman & Tversky, 1973)

- Aim: To test if people prioritize descriptive stereotypes over known population statistics.

- Procedure: Participants read a description of “Tom W.,” a student described as “dull,” “neat,” and “tidy.” Researchers explicitly informed them that 80% of the student body studied humanities.

- Findings: Over 95% of participants guessed Tom was a computer science major. Their guesses remained unchanged despite the overwhelming base-rate advantage of humanities students.

- Conclusions: The description matched the computer scientist stereotype so perfectly that participants abandoned statistical logic.

3. Medical Diagnoses and Causal Context (Krynski & Tenenbaum, 2007)

- Aim: To investigate if providing a causal explanation helps people utilize base rates.

- Procedure: Participants judged the likelihood of cancer after a positive mammogram. One group received only the statistics. A second group was told that “dense but harmless cysts” could also cause a positive result.

- Findings: Accuracy jumped from 8% to 46% when the alternative causal explanation (the cyst) was provided.

- Conclusions: People neglect base rates when the relevance is unclear. They use them more effectively when the data maps to their intuitive causal knowledge.

The following strategies can help reduce base-rate neglect:

- Use Frequencies: Translate percentages into raw counts to help visualize the actual numbers involved.

- Identify Causes: Look for alternative explanations that could account for the specific details you are seeing.

- Think Like a Statistician: Deliberately engage System 2 by slowing down and questioning the “story” you are telling yourself.

- Assess Evidence Quality: If the specific details are flimsy or stereotypical, let the base rate dominate your decision.

The Influence of Causal Context

Not all base rates are ignored equally.

The human mind is “hungry for causal stories,” meaning we prefer information that explains why something happened.

Statistical vs. Causal Base Rates

Kahneman and Tversky distinguished between two types of base rate. Statistical base rates are mere facts about a population that lack a narrative.

These are generally underweighted.

However, causal base rates change our view of how an individual case came to be.

For example, if you hear that 80% of accidents involve “Company A” drivers because they are reckless, you are more likely to use that data than a dry statistic.

The Role of Missing Context

Researchers Krynski and Tenenbaum argue that people ignore base rates primarily when their relevance is not explicitly clear.

Study: The False Positive Scenario (Krynski & Tenenbaum, 2007)

- Aim: To see if providing a causal explanation helps people use base-rate information.

- Procedure: Participants judged the likelihood of cancer after a positive mammogram. One group was given just the statistics. Another group was told that “harmless cysts” can cause false positives.

- Findings: Without the “cyst” explanation, only 8% used the base rate correctly. With the causal explanation, accuracy jumped to 46%.

- Conclusions: Humans are better at statistical reasoning when they have an intuitive causal model to follow.

Conclusion: Bounded Rationality

While the base-rate fallacy leads to errors, it does not mean humans are fundamentally irrational.

Instead, we operate under bounded rationality: the idea that we make reasonable decisions within the limits of our time, information, and cognitive capacity.

Heuristics like representativeness are highly efficient in a complex world.

Their flaws are most visible in specific, laboratory-designed scenarios where abstract logic is prioritized over practical, everyday intuition.

References

Bar-Hillel, M. (1980). The base-rate fallacy in probability judgments. Acta Psychologica, 44(3), 211–233.

Barbey, A. K., & Sloman, S. A. (2007). Base-rate respect: From ecological rationality to dual processes. Behavioral and Brain Sciences, 30(3), 241–254.

Bar-Hillel, M. (1983). The base rate fallacy controversy. In Advances in Psychology (Vol. 16, pp. 39–61). North-Holland.

Gigerenzer, G., & Hoffrage, U. (1995). How to improve Bayesian reasoning without instruction: Frequency formats. Psychological Review, 102(4), 684–704.

Heller, R. F., Saltzstein, H. D., & Caspe, W. B. (1992). Heuristics in medical and non-medical decision-making. The Quarterly Journal of Experimental Psychology Section A, 44(2), 211–235.

Kahneman, D. (2011). Thinking, fast and slow. Farrar, Straus and Giroux.

Kahneman, D., Slovic, P., & Tversky, A. (Eds.). (1982). Judgment under uncertainty: Heuristics and biases. Cambridge University Press.

Kahneman, D., & Tversky, A. (1972). Subjective probability: A judgment of representativeness. Cognitive Psychology, 3(3), 430–454.

Kahneman, D., & Tversky, A. (1973). On the psychology of prediction. Psychological Review, 80(4), 237–251.

Koehler, J. J. (1996). The base rate fallacy reconsidered: Descriptive, normative, and methodological challenges. Behavioral and Brain Sciences, 19(1), 1–17.

Krynski, T. R., & Tenenbaum, J. B. (2007). The role of causal models in reasoning under uncertainty. Psychological Science, 18(5), 430–436.

Macchi, L. (1995). Pragmatic aspects of the base-rate fallacy. The Quarterly Journal of Experimental Psychology, 48(1), 188–207.

Tversky, A., & Kahneman, D. (1973). Availability: A heuristic for judging frequency and probability. Cognitive Psychology, 5(2), 207–232.

Tversky, A., & Kahneman, D. (1974). Judgment under uncertainty: Heuristics and biases. Science, 185(4157), 1124–1131.